SSD Acceleration

Latest revision as of 21:33, 10 April 2022

| SSD Acceleration test | |
|---|---|
| Participants | |
| Skills | Linux |
| Status | Active |
| Niche | Software |
| Purpose | Infra |
| Tool | No |
| Location | Server room |
| Cost | 0 |
| Tool category | |


In preparation for building a new storage environment, I wanted to see whether SSD acceleration would improve IOPS and throughput on a classic HDD array.

attempts

attempt 1

For this, I put together the following machine, an ASUS P5S800-VM with:

- 1 GB RAM
- 1x Intel(R) Celeron(R) CPU 2.66GHz
- 1x Hitachi Deskstar IC35L120AVV207 'boot' disk
  - IDE
  - 241254720 sectors @ 4096 bits (512 bytes) per sector (115 GiB)
- 1x Hitachi HDT721010SLA360 'storage' disk
  - SATA
  - 1953525168 sectors @ 4096 bits (512 bytes) per sector (931.5 GiB)
- 1x Kingston SV300S37A120G 'caching' SSD
  - SATA
  - 234441648 sectors @ 4096 bits (512 bytes) per sector (111.7 GiB)
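The sizes quoted for the three disks follow from the sector counts if each sector holds 512 bytes (4096 bits). A minimal sketch of that arithmetic, where `sectors_to_gib` is a hypothetical helper name and the 512-byte sector size is an assumption:

```shell
#!/bin/sh
# Convert a count of 512-byte sectors to GiB (hypothetical helper;
# the 512-byte sector size is assumed, not read from the device).
sectors_to_gib() {
    awk -v sectors="$1" 'BEGIN { printf "%.2f\n", sectors * 512 / 1073741824 }'
}

sectors_to_gib 241254720    # boot disk
sectors_to_gib 1953525168   # storage disk
sectors_to_gib 234441648    # caching SSD
```

On a live system the sector count can be read with `blockdev --getsz <device>`, which reports 512-byte sectors.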

Using fio and https://github.com/tsaikd/fio-wrapper, I tested the raw MB/s and IOPS of each device, using the default 32 MB file size: (Graphs coming soon)

- Boot:
  - randread, 64 users, 8K blocks: 1.7 MB/s, 288 ms latency, 219 IOPS
  - randrw, 64 users, 8K blocks: 0.68 MB/s, 385 ms latency, 86 IOPS
  - read, 64 users, 8K blocks: 32 MB/s, 15 ms latency, 4207 IOPS
  - write, 64 users, 8K blocks: 20 MB/s, 24 ms latency, 2569 IOPS
- Storage:
  - randread, 64 users, 8K blocks: 1.7 MB/s, 283 ms latency, 222 IOPS
  - randrw, 64 users, 8K blocks: 0.832 MB/s, 305 ms latency, 106 IOPS
  - read, 64 users, 8K blocks: 34.2 MB/s, 14.5 ms latency, 4381 IOPS
  - write, 64 users, 8K blocks: 17.4 MB/s, 27 ms latency, 2220 IOPS
- Caching:
  - randread, 64 users, 8K blocks: 8 MB/s, 0.46 ms latency, 2060 IOPS
  - randrw, 64 users, 8K blocks: 10 MB/s, 23.7 ms latency, 1348 IOPS
  - read, 64 users, 8K blocks: 29.7 MB/s, 16.2 ms latency, 3811 IOPS
  - write, 64 users, 8K blocks: 20.9 MB/s, 23.6 ms latency, 2683 IOPS
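For readers who want to reproduce the four test patterns without fio-wrapper, the runs could look roughly like the sketch below. It only prints the fio command lines. Two things are assumptions: "64 users" is modelled as 64 parallel jobs (fio-wrapper may map it differently), and `/mnt/test/fio.dat` is a placeholder path on the device under test.

```shell
#!/bin/sh
# Print (not run) a fio command line for one test pattern.
# Assumption: "64 users" is modelled as --numjobs=64.
fio_cmd() {
    pattern="$1"   # randread, randrw, read or write
    target="$2"    # file on the device under test (placeholder path below)
    echo "fio --name=$pattern --rw=$pattern --bs=8k --size=32m" \
         "--numjobs=64 --direct=1 --group_reporting --filename=$target"
}

for pattern in randread randrw read write; do
    fio_cmd "$pattern" /mnt/test/fio.dat
done
```

`--direct=1` bypasses the page cache so that the 32 MB test file is not simply served from RAM; whether the original runs did this is not stated.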

We can see that the SSD delivers about the same throughput as the spinning disks for sequential reads and writes, but is significantly better at random reads and writes. This is not unexpected.

dmsetup cache

A write-back dmsetup cache system was constructed using the following configuration:

    root@roger-wilco:~# dmsetup ls
    roger--wilco--vg-swap   (254:2)
    roger--wilco--vg-root   (254:0)
    ssd-metadata    (254:3)
    ssd-blocks      (254:4)
    cached-disk     (254:6)
    vg0-spindle     (254:5)
    root@roger-wilco:~# dmsetup table ssd-metadata
    0 924000 linear 8:33 0
    root@roger-wilco:~# dmsetup table ssd-blocks
    0 233515600 linear 8:33 924000
    root@roger-wilco:~# dmsetup table cached-disk
    0 1953513472 cache 254:3 254:4 254:5 512 1 writeback default 0
    root@roger-wilco:~# vgs
      VG             #PV #LV #SN Attr   VSize   VFree
      roger-wilco-vg   1   3   0 wz--n- 114.80g    0
      vg0              1   1   0 wz--n- 931.51g    0
    root@roger-wilco:~# pvs
      PV         VG             Fmt  Attr PSize   PFree
      /dev/sda5  roger-wilco-vg lvm2 a--  114.80g    0
      /dev/sdb1  vg0            lvm2 a--  931.51g    0
    root@roger-wilco:~# lvs
      LV      VG             Attr       LSize   Pool Origin Data%  Meta%  Move Log Cpy%Sync Convert
      home    roger-wilco-vg -wi-ao---- 103.49g
      root    roger-wilco-vg -wi-ao----   9.31g
      swap    roger-wilco-vg -wi-ao----   2.00g
      spindle vg0            -wi-ao---- 931.51g
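The `cached-disk` table line above follows the dm-cache target layout: start, length, `cache`, metadata device, cache device, origin device, block size in sectors, feature count and features, then policy and policy argument count. A small sketch that rebuilds that line from its parts; `cache_table` is a hypothetical helper, and the `dmsetup create` calls are left as comments because they need root and are destructive:

```shell
#!/bin/sh
# Build a dm-cache table line:
#   <start> <length> cache <metadata> <cache> <origin> <block size>
#   <#features> <feature> <policy> <#policy args>
# Lengths and the block size are in 512-byte sectors (512 sectors = 256 KiB).
cache_table() {
    length="$1"; meta="$2"; cache="$3"; origin="$4"; block="$5"; mode="$6"
    echo "0 $length cache $meta $cache $origin $block 1 $mode default 0"
}

table=$(cache_table 1953513472 254:3 254:4 254:5 512 writeback)
echo "$table"

# To apply it (as root, not run here), the SSD partition is first split
# into a metadata and a data device, then the cache is stacked on top:
#   dmsetup create ssd-metadata --table "0 924000 linear 8:33 0"
#   dmsetup create ssd-blocks   --table "0 233515600 linear 8:33 924000"
#   dmsetup create cached-disk  --table "$table"
```

Passing `writethrough` as the last argument gives the table for the writethrough variant.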

- Cached:
  - randread, 64 users, 8K blocks: 26.7 MB/s, 18.5 ms latency, 3423 IOPS
  - randrw, 64 users, 8K blocks: 5.36 MB/s, 46.6 ms latency, 686 IOPS
  - read, 64 users, 8K blocks: 30.1 MB/s, 16.5 ms latency, 3857 IOPS
  - write, 64 users, 8K blocks: 1 MB/s, 477 ms latency, 127 IOPS

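With this setup, we see a tradeoff between sequential write and random I/O: randrw rises from 0.832 MB/s on the bare storage disk to 5.36 MB/s, while sequential write drops from 17.4 MB/s to 1 MB/s. The reason for the slow sequential write is unclear; possibly an issue with the writeback configuration?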

- Cached (writethrough):
  - randread, 64 users, 8K blocks: 0.8 MB/s, 598 ms latency, 102 IOPS
  - randrw, 64 users, 8K blocks: 0.4 MB/s, 601 ms latency, 52 IOPS
  - read, 64 users, 8K blocks: 30 MB/s, 16.5 ms latency, 3836 IOPS
  - write, 64 users, 8K blocks: 9 MB/s, 53 ms latency, 1160 IOPS

NOPE

lvm cache

OK, so there's another means of building caching:

    root@roger-wilco:~# vgextend vg0 /dev/sdc1
      Physical volume "/dev/sdc1" successfully created
      Volume group "vg0" successfully extended

    root@roger-wilco:~# pvs
      PV         VG             Fmt  Attr PSize   PFree
      /dev/sda5  roger-wilco-vg lvm2 a--  114.80g       0
      /dev/sdb1  vg0            lvm2 a--  931.51g       0
      /dev/sdc1  vg0            lvm2 a--  111.79g 111.79g
    root@roger-wilco:~# lvs
      LV      VG             Attr       LSize   Pool Origin Data%  Meta%  Move Log Cpy%Sync Convert
      home    roger-wilco-vg -wi-ao---- 103.49g
      root    roger-wilco-vg -wi-ao----   9.31g
      swap    roger-wilco-vg -wi-ao----   2.00g
      spindle vg0            -wi-a----- 931.51g

    root@roger-wilco:~# lvcreate -L 1G cache_meta vg0 /dev/sdc1
      Volume group "cache_meta" not found
    root@roger-wilco:~# lvcreate -L 1G -n cache_meta vg0 /dev/sdc1
      Logical volume "cache_meta" created
    root@roger-wilco:~# lvcreate -l 100%FREE -n cache vg0 /dev/sdc1
      Logical volume "cache" created
    root@roger-wilco:~# lvs
      LV         VG             Attr       LSize   Pool Origin Data%  Meta%  Move Log Cpy%Sync Convert
      home       roger-wilco-vg -wi-ao---- 103.49g
      root       roger-wilco-vg -wi-ao----   9.31g
      swap       roger-wilco-vg -wi-ao----   2.00g
      cache      vg0            -wi-a----- 110.79g
      cache_meta vg0            -wi-a-----   1.00g
      spindle    vg0            -wi-a----- 931.51g
    root@roger-wilco:~# lvconvert --type cache-pool --poolmetadata vg0/cache_meta vg0/cache
      WARNING: Converting logical volume vg0/cache and vg0/cache_meta to pool's data and metadata volumes.
      THIS WILL DESTROY CONTENT OF LOGICAL VOLUME (filesystem etc.)
    Do you really want to convert vg0/cache and vg0/cache_meta? [y/n]: y
      Volume group "vg0" has insufficient free space (0 extents): 256 required.
    root@roger-wilco:~# lvconvert --type cache vg0/cache vg0/spindle
      --cache requires --cachepool.
      Run `lvconvert --help' for more information.
    root@roger-wilco:~# lvconvert --type cache --cachepool vg0/cache vg0/spindle
      WARNING: Converting logical volume vg0/cache to pool's data volume.
      THIS WILL DESTROY CONTENT OF LOGICAL VOLUME (filesystem etc.)
    Do you really want to convert vg0/cache? [y/n]: y
      Rounding up size to full physical extent 60.00 MiB
      Volume group "vg0" has insufficient free space (0 extents): 15 required.

(ER...)

    root@roger-wilco:~# e2fsck -f /dev/mapper/vg0-spindle
    ...
    root@roger-wilco:~# resize2fs /dev/mapper/vg0-spindle 300G
    ...
    root@roger-wilco:~# lvreduce -L 500G vg0/spindle
      WARNING: Reducing active logical volume to 500.00 GiB
      THIS MAY DESTROY YOUR DATA (filesystem etc.)
    Do you really want to reduce spindle? [y/n]: y
      Size of logical volume vg0/spindle changed from 931.51 GiB (238466 extents) to 500.00 GiB (128000 extents).
      Logical volume spindle successfully resized
    root@roger-wilco:~# lvconvert --type cache --cachepool vg0/cache vg0/spindle
      WARNING: Converting logical volume vg0/cache to pool's data volume.
      THIS WILL DESTROY CONTENT OF LOGICAL VOLUME (filesystem etc.)
    Do you really want to convert vg0/cache? [y/n]: y
      Rounding up size to full physical extent 60.00 MiB
      Logical volume "lvol0" created
      Logical volume "lvol0" created
      Converted vg0/cache to cache pool.
      Logical volume vg0/spindle is now cached.
    root@roger-wilco:~# lvresize -L +100%FREE vg0/spindle
      Invalid argument for --size: +100%FREE
      Error during parsing of command line.
    root@roger-wilco:~# lvresize -l +100%FREE vg0/spindle
      Unable to resize logical volumes of cache type.

(ER.?!)

    root@roger-wilco:~# lvremove vg0/spindle
    Do you really want to remove active logical volume spindle? [y/n]: y
      /usr/sbin/cache_check: execvp failed: No such file or directory
      WARNING: Integrity check of metadata for pool vg0/cache failed.
      Logical volume "spindle" successfully removed
    root@roger-wilco:~# vgs
      VG             #PV #LV #SN Attr   VSize   VFree
      roger-wilco-vg   1   3   0 wz--n- 114.80g       0
      vg0              2   2   0 wz--n-   1.02t 931.39g
    root@roger-wilco:~# lvcreate -L 930G -n spindle vg0 /dev/sdb1
      Logical volume "spindle" created
    root@roger-wilco:~# lvconvert --type cache --cachepool vg0/cache vg0/spindle
      Logical volume vg0/spindle is now cached.
    root@roger-wilco:~# mkfs.ext4 /dev/mapper/vg0-spindle
    mke2fs 1.42.12 (29-Aug-2014)
    /dev/mapper/vg0-spindle contains a ext4 file system
        last mounted on /mnt/cached on Wed Apr 6 11:54:51 2016
    Proceed anyway? (y,n) n

(OK...)

    root@roger-wilco:~# mount /dev/mapper/vg0-spindle /mnt/cached/
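The two "insufficient free space" errors in the session above are consistent with the cache pool needing free extents in the volume group after `cache` had taken 100%FREE: the first conversion asked for 256 extents (1 GiB at 4 MiB per extent, the same size as `cache_meta`), most likely for a spare metadata volume. A hedged sketch of sizing the data volume so that room is left; the helper names are hypothetical, the "spare as large as the metadata" rule is an assumption, and the commands are only printed because they need root and destroy data:

```shell
#!/bin/sh
# Extents left for the cache data LV after reserving the metadata LV and
# an equally sized spare (assumption: LVM wants a spare of that size).
cache_data_extents() {
    free="$1"; meta="$2"
    echo $(( free - 2 * meta ))
}

# Print the LVM commands instead of running them.
plan_cache() {
    vg="$1"; origin="$2"; ssd="$3"; free="$4"; meta="$5"
    data=$(cache_data_extents "$free" "$meta")
    echo "lvcreate -l $meta -n cache_meta $vg $ssd"
    echo "lvcreate -l $data -n cache $vg $ssd"
    echo "lvconvert --type cache-pool --poolmetadata $vg/cache_meta $vg/cache"
    echo "lvconvert --type cache --cachepool $vg/cache $vg/$origin"
}

# 28618 free extents is an illustrative figure for the 111.79 GiB SSD PV.
plan_cache vg0 spindle /dev/sdc1 28618 256
```

The `cache_check: execvp failed` warning suggests the cache metadata tools were not installed; on Debian these ship in the `thin-provisioning-tools` package.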

attempt 2

For the upcoming downscaling of the server infra, we needed a new target. The question of an SSD cache came up, but was discarded as not-for-production-yet. Nevertheless, I tested its performance:

setup

- Intel Pentium J4205 (ASRock J4205-ITX), 16GB 1600MHz DDR3

- HDD; 1TB Toshiba MQ01ABD100 5400rpm, 8MB cache

- SSD; 120GB Kingston SUV400S37120G

    Disk /dev/sda: 931.5 GiB, 1000204886016 bytes, 1953525168 sectors
    Units: sectors of 1 * 512 = 512 bytes
    Sector size (logical/physical): 512 bytes / 4096 bytes
    I/O size (minimum/optimal): 4096 bytes / 4096 bytes
    Disklabel type: gpt
    Disk identifier: 28FE43B1-093A-4350-8E02-E3383C59A994

    Device         Start        End    Sectors   Size Type
    /dev/sda1       2048       4095       2048     1M BIOS boot
    /dev/sda2       4096     528383     524288   256M EFI System
    /dev/sda3     528384  125528063  124999680  59.6G Linux filesystem
    /dev/sda4  125528064  188028927   62500864  29.8G Linux swap
    /dev/sda5  188028928 1953525134 1765496207 841.9G Linux filesystem

Only /dev/sda5 is used for testing.

Plain disk results

(all results 64 users, 8K blocks for comparison)

- randread, 2.909MB/s, 170.255ms, 372 IOps

- randrw, 0.476MB/s, 657.810ms, 60 IOps

- read, 36.373MB/s, 13.671ms, 4655 IOps

- write, 7.488MB/s, 62.896ms, 958 IOps
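The throughput and IOPS columns are two views of the same measurement: at a fixed 8K block size, throughput in MiB/s is IOPS × 8 / 1024. A quick sanity check on the plain-disk figures above, using a hypothetical `iops_to_mibs` helper:

```shell
#!/bin/sh
# Throughput in MiB/s implied by an IOPS figure at a block size given in KiB.
iops_to_mibs() {
    awk -v iops="$1" -v kib="$2" 'BEGIN { printf "%.2f\n", iops * kib / 1024 }'
}

iops_to_mibs 372 8     # randread
iops_to_mibs 4655 8    # read
```

These come out at 2.91 and 36.37, close to the reported 2.909 and 36.373 MB/s, which suggests the tool reports binary megabytes.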

Full results here: SSD Acceleration/Toshiba MQ01ABD100

bcache results

I set up the full SSD (/dev/sdb) as the cache device for the backing store (/dev/sda5). I also enabled writeback and discard by default.

    apt install bcache-tools
    make-bcache -C /dev/sdb -B /dev/sda5 --discard --writeback
    mkfs.ext4 /dev/bcache0

(all results 64 users, 8K blocks for comparison)

- randread, 177.577MB/s, 2.505ms, 22729 IOps

- randrw, 10.483MB/s, 31.560ms, 1341 IOps

- read, 162.114MB/s, 2.716ms, 20750 IOps

- write, 35.676MB/s, 13.936ms, 4566 IOps
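To put the writeback numbers next to the plain-disk results, the IOPS ratio per pattern can be computed directly. A small sketch with a hypothetical `speedup` helper, fed with the randread and write figures from the two result lists:

```shell
#!/bin/sh
# Ratio of cached to uncached IOPS, to one decimal place.
speedup() {
    awk -v base="$1" -v cached="$2" 'BEGIN { printf "%.1f\n", cached / base }'
}

speedup 372 22729   # randread: plain disk vs bcache writeback
speedup 958 4566    # write:    plain disk vs bcache writeback
```

This prints 61.1 and 4.8, so random reads gain far more than sequential writes in this test. With a 32 MB test file the whole working set fits in the cache, so the ratios are best read as an upper bound.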

And with writethrough:

- randread, 160.338MB/s, 2.795ms, 20523 IOps

- randrw, 1.302MB/s, 226.778ms, 166 IOps

- read, 146.139MB/s, 3.089ms, 18705 IOps

- write, 7.118MB/s, 63.971ms, 911 IOps
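How the device was switched to writethrough for this run is not recorded. One way that should work is bcache's runtime `cache_mode` attribute in sysfs, sketched below. `set_cache_mode` is a hypothetical helper; it takes the sysfs directory as a parameter so it can be tried against a scratch directory first.

```shell
#!/bin/sh
# Write a bcache cache mode into a device's sysfs directory.
# dev_dir is normally /sys/block/bcache0/bcache.
set_cache_mode() {
    dev_dir="$1"; mode="$2"
    case "$mode" in
        writethrough|writeback|writearound|none) ;;
        *) echo "unknown cache mode: $mode" >&2; return 1 ;;
    esac
    echo "$mode" > "$dev_dir/cache_mode"
}

# Example (as root, not run here):
#   set_cache_mode /sys/block/bcache0/bcache writethrough
#   cat /sys/block/bcache0/bcache/cache_mode
```

Reading `cache_mode` back lists all modes with the active one in square brackets.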

Full results here: SSD Acceleration/Toshiba MQ01ABD100 with bcache

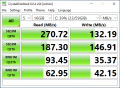

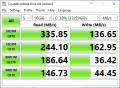

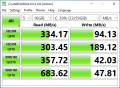

attempt 3

This is the setup currently running on erratic.

These results come from CrystalDiskMark 8.0.4 in a KVM guest running on the machine, with disk caching set to 'default (no cache)'.